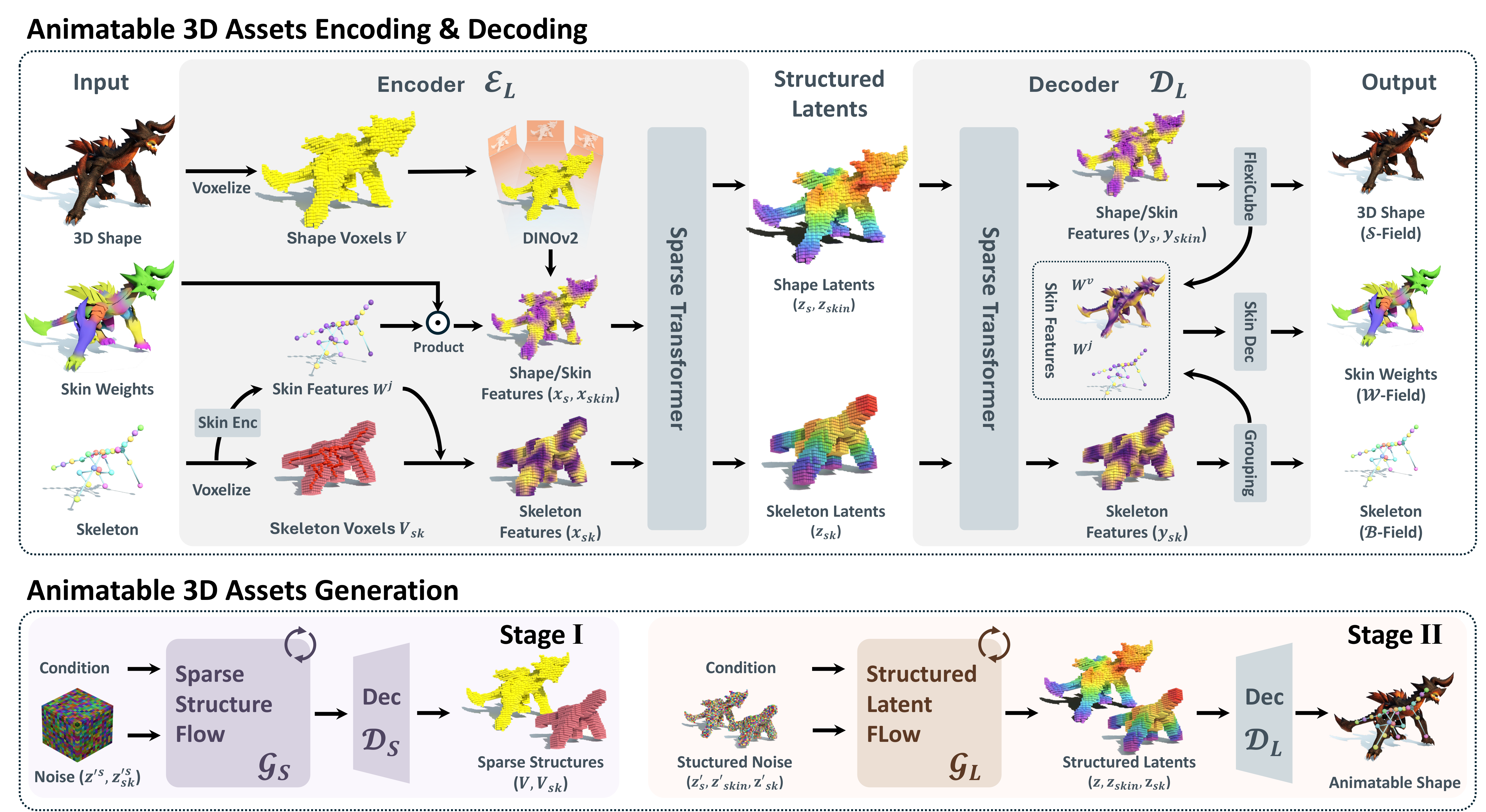

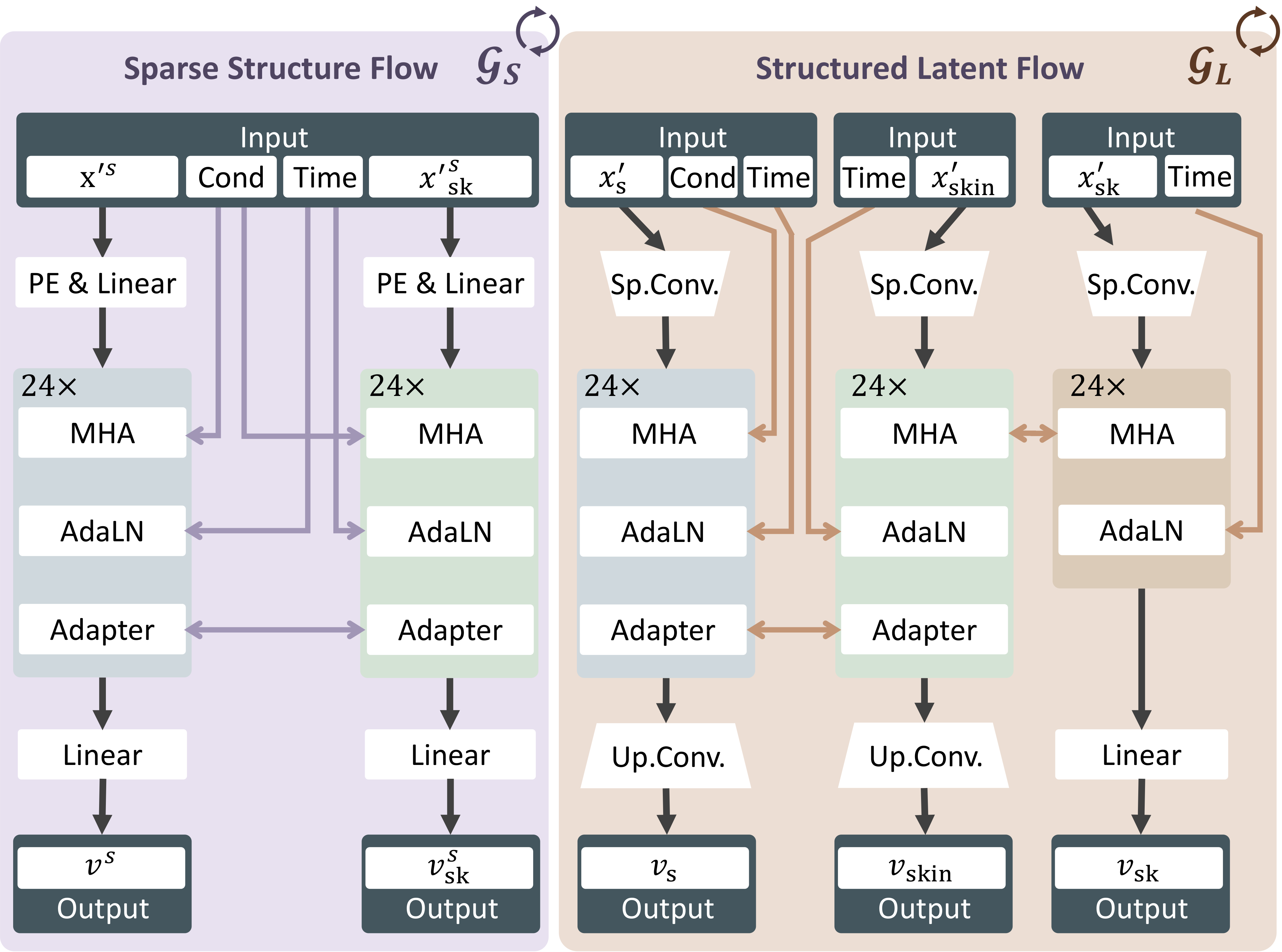

Animatable 3D assets, defined as geometry equipped with an articulated skeleton and skinning weights, are fundamental to interactive graphics, embodied agents, and animation production. While recent 3D generative models can synthesize visually plausible shapes from images, the results are typically static. Obtaining usable rigs via post-hoc auto-rigging is brittle and often produces skeletons that are topologically inconsistent with the generated geometry. We present AniGen, a unified framework that directly generates animation-ready 3D assets conditioned on a single image. Our key insight is to represent shape, skeleton, and skinning as mutually consistent S³ Fields (Shape, Skeleton, Skin) defined over a shared spatial domain. To learn these fields robustly, we introduce two technical innovations: (i) a confidence-decaying skeleton field that explicitly handles the geometric ambiguity of bone prediction at Voronoi boundaries, and (ii) a dual skin feature field that decouples skinning weights from specific joint counts, allowing a fixed-architecture network to predict rigs of arbitrary complexity. Built upon a two-stage flow-matching pipeline, AniGen first synthesizes a sparse structural scaffold and then generates dense geometry and articulation in a structured latent space. Extensive experiments demonstrate that AniGen substantially outperforms state-of-the-art sequential (generate-then-rig) baselines in rig validity and animation quality, and generalizes effectively to in-the-wild images across diverse categories, including animals, humanoids, and machinery.
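To make concrete what "skeleton and skinning weights" provide at animation time, the sketch below shows standard linear blend skinning (LBS), the usual way such weights are consumed to pose a mesh. It is not taken from AniGen; the function names and the 2D rigid-transform representation are illustrative assumptions chosen to keep the example small.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
# Hypothetical 2D rigid transform: (rotation angle in radians, tx, ty).
# Assumed to map rest-pose coordinates directly to posed coordinates,
# i.e. already composed with the inverse bind pose of the bone.
Transform = Tuple[float, float, float]


def apply_transform(t: Transform, p: Point) -> Point:
    """Rotate p about the origin by t's angle, then translate it."""
    angle, tx, ty = t
    c, s = math.cos(angle), math.sin(angle)
    x, y = p
    return (c * x - s * y + tx, s * x + c * y + ty)


def linear_blend_skinning(
    rest_points: Sequence[Point],
    weights: Sequence[Sequence[float]],  # weights[i][j]: influence of bone j on point i
    bone_transforms: Sequence[Transform],
) -> List[Point]:
    """Pose each point as the weighted average of its per-bone transformed positions."""
    posed = []
    for p, w in zip(rest_points, weights):
        total = sum(w)
        x = y = 0.0
        for wj, t in zip(w, bone_transforms):
            qx, qy = apply_transform(t, p)
            x += wj * qx
            y += wj * qy
        # Normalize in case the weights do not sum exactly to 1.
        posed.append((x / total, y / total))
    return posed
```

For example, a point at `(1, 0)` weighted equally between a static bone and a bone rotated by 90 degrees lands halfway between the two rigidly transformed copies, at roughly `(0.5, 0.5)`. Skeleton and skinning errors therefore show up directly as artifacts once the asset is posed, which is why consistency between the three fields matters.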

Each row shows one subject across six viewpoints. The last three columns overlay the generated skeleton.

Subjects shown: Dog, Eagle, Horse, Bird, Whale, Iron Boy, Evo, Mairo, Child, BrickBob, Bruno Star, Woman, Machine Arm, Machine Hand, Macbook, Plant, Machine Dog, Money Tree.

AniGen vs. state-of-the-art auto-rigging baselines. The second row shows the skeleton overlay.

AniGen co-generates shape, skeleton, and skinning through unified S³ Fields. We introduce a confidence-decaying skeleton field to handle the geometric ambiguity of bone prediction near Voronoi boundaries, and a dual skin feature field that enables joint-count-agnostic skinning. A two-stage flow-matching pipeline first synthesizes a sparse structure, then generates dense geometry and articulation.
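The ambiguity the skeleton field addresses can be illustrated with a small sketch. It assumes, hypothetically, that each query point is assigned to its nearest bone segment (its Voronoi cell over bones) and regresses an offset to that bone, and that the target confidence decays to zero as the gap between the nearest and second-nearest bone distances shrinks. The exponential form, the `tau` parameter, and all function names are our assumptions, not the paper's formulation.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Bone = Tuple[Vec3, Vec3]  # hypothetical: (head joint, tail joint) as a line segment


def closest_point_on_segment(p: Vec3, a: Vec3, b: Vec3) -> Vec3:
    """Project p onto segment ab, clamping to the endpoints."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    # Degenerate (zero-length) bone: fall back to the head joint.
    t = 0.0 if denom == 0.0 else sum(ap[i] * ab[i] for i in range(3)) / denom
    t = max(0.0, min(1.0, t))
    return tuple(a[i] + t * ab[i] for i in range(3))


def skeleton_field_target(p: Vec3, bones: List[Bone], tau: float = 0.05):
    """Return (nearest bone index, offset to that bone, confidence) for one point.

    Hypothetical decay: confidence is 0 exactly on the Voronoi boundary between
    the two closest bones and approaches 1 as the distance gap grows.
    """
    dists, offsets = [], []
    for a, b in bones:
        q = closest_point_on_segment(p, a, b)
        off = tuple(q[i] - p[i] for i in range(3))
        offsets.append(off)
        dists.append(math.sqrt(sum(c * c for c in off)))
    order = sorted(range(len(bones)), key=lambda j: dists[j])
    nearest = order[0]
    if len(bones) < 2:
        return nearest, offsets[nearest], 1.0  # no competing bone, no ambiguity
    gap = dists[order[1]] - dists[nearest]
    confidence = 1.0 - math.exp(-gap / tau)
    return nearest, offsets[nearest], confidence
```

A point midway between two parallel bones gets confidence 0, so a loss weighted by this confidence would not force the network to commit to an arbitrary side of the boundary, while points deep inside a bone's cell are supervised almost fully.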
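One plausible reading of "joint-count-agnostic skinning" is sketched below: instead of outputting a fixed-length weight vector, the network predicts a fixed-dimensional skin feature for every surface point and for every joint, and weights are recovered by comparing the two. The dot-product similarity, the softmax, the `temperature` parameter, and the function name are all our assumptions for illustration, not AniGen's published design.

```python
import math
from typing import List, Sequence


def skinning_weights_from_features(
    point_feature: Sequence[float],
    joint_features: Sequence[Sequence[float]],
    temperature: float = 1.0,
) -> List[float]:
    """Softmax over dot-product similarities between one point and every joint.

    The feature dimension is fixed, but len(joint_features) is unconstrained,
    so the same output head serves rigs with 2 joints or 200.
    """
    scores = [
        sum(p * j for p, j in zip(point_feature, jf)) / temperature
        for jf in joint_features
    ]
    m = max(scores)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]
```

The design point is that the number of weights per point follows from the number of joint features supplied at inference time, not from the network architecture, and the softmax keeps each point's weights non-negative and summing to one as LBS expects.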
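For readers unfamiliar with flow matching, the sketch below shows the generic ingredients under a linear (rectified-flow style) probability path: the interpolant, the velocity regression target, an Euler sampler, and a hypothetical two-stage chain in which the second flow is conditioned on the first flow's output. Time conventions vary between implementations (here t = 0 is noise and t = 1 is data); image conditioning is assumed to be folded into the velocity functions, and `two_stage_generate` is an illustrative name, not AniGen's API.

```python
import random
from typing import Callable, List

Vector = List[float]
VelocityFn = Callable[[Vector, float], Vector]


def interpolate(noise: Vector, data: Vector, t: float) -> Vector:
    """Linear probability path x_t = (1 - t) * noise + t * data."""
    return [(1.0 - t) * n + t * d for n, d in zip(noise, data)]


def velocity_target(noise: Vector, data: Vector) -> Vector:
    """Regression target dx_t/dt along the linear path (constant in t)."""
    return [d - n for n, d in zip(noise, data)]


def euler_sample(velocity: VelocityFn, noise: Vector, steps: int = 25) -> Vector:
    """Integrate dx/dt = velocity(x, t) from t = 0 (noise) to t = 1 (sample)."""
    x = list(noise)
    dt = 1.0 / steps
    for k in range(steps):
        v = velocity(x, k * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


def two_stage_generate(stage1: VelocityFn, stage2, dim1: int, dim2: int, seed: int = 0):
    """Hypothetical chaining: stage 2's velocity also receives stage 1's sample."""
    rng = random.Random(seed)
    structure = euler_sample(stage1, [rng.gauss(0.0, 1.0) for _ in range(dim1)])
    # Bind the sampled sparse structure as extra conditioning for the second flow.
    detail = euler_sample(
        lambda x, t: stage2(x, t, structure),
        [rng.gauss(0.0, 1.0) for _ in range(dim2)],
    )
    return structure, detail
```

During training, a network would be regressed onto `velocity_target(noise, data)` at points `interpolate(noise, data, t)` for random `t`; at inference, `euler_sample` integrates the learned field. Splitting generation into a coarse structural stage and a conditioned detail stage lets the second flow operate only where the first has placed structure.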

@article{huang2026anigen,
  title={AniGen: Unified $S^3$ Fields for Animatable 3D Asset Generation},
  author={Huang, Yi-Hua and Zou, Zi-Xin and He, Yuting and Chang, Chirui and Pu, Cheng-Feng and Yang, Ziyi and Guo, Yuan-Chen and Cao, Yan-Pei and Qi, Xiaojuan},
  journal={arXiv preprint arXiv:2604.08746},
  year={2026}
}